How to Decline an Analyst Evaluation

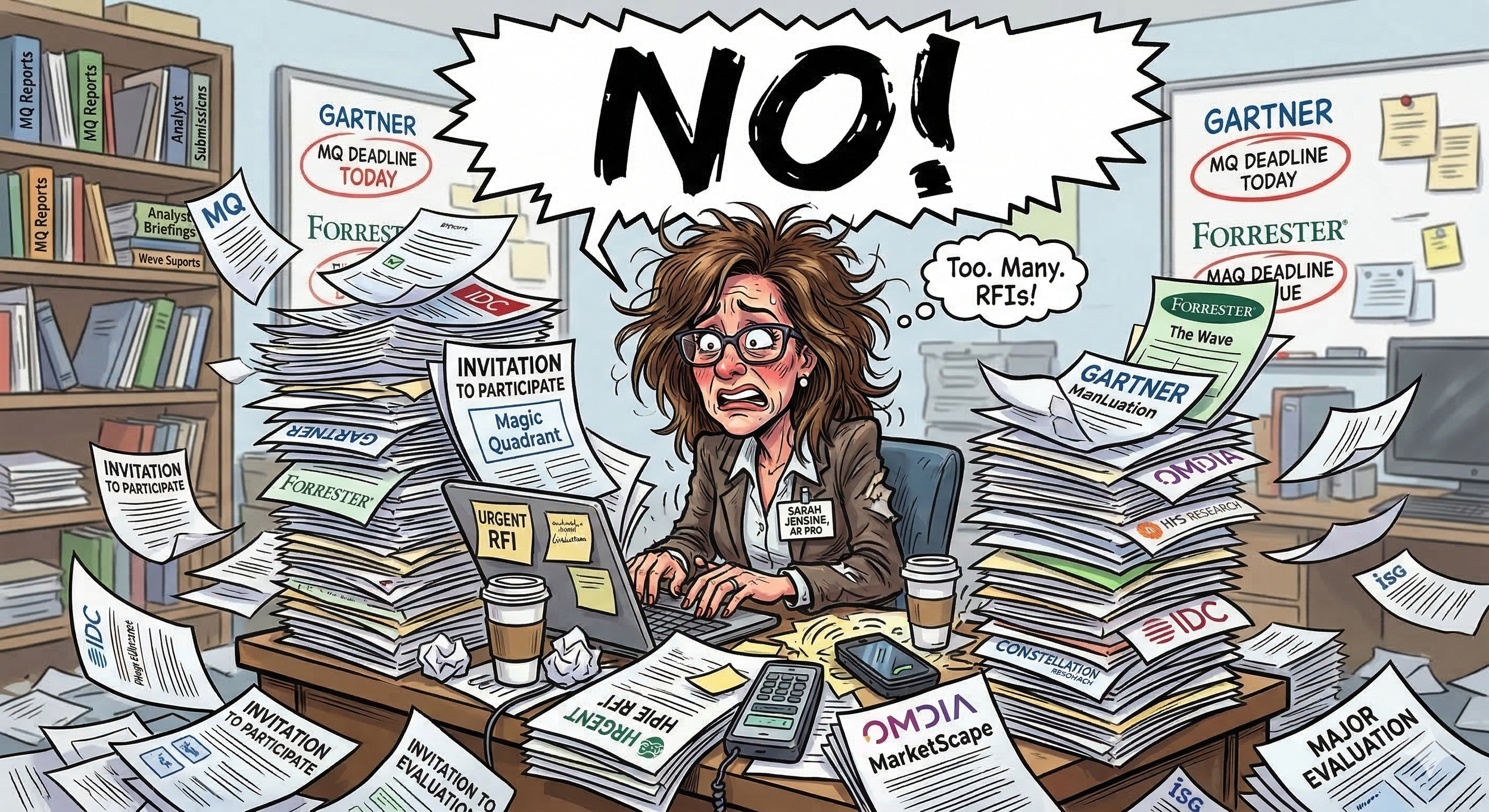

An Analyst Relations Professional drowns in a sea of Major Evaluation Requests - did anyone consider whether we should actually do these?

Here’s your practical guide to saying no and minimizing risk…

The invitations are starting to feel like a deluge. Forrester wants you in the Wave. IDC is launching a new MarketScape. Gartner has added another category that your product technically fits. And somewhere in your company, a well-meaning executive forwards each one with "thoughts?" Rough translation: "we should probably do this."

But should we?

Because at some point this math stops working. Major evaluations involve significant cost and, in some cases, risk as well, especially if you lack the resources to support them properly. And yet I watch companies participate out of muscle memory, long past the point where it makes strategic sense, simply because no one has built the case to stop.

Here's how you build that case.

Step 1 - Understand what "no" actually means for each specific firm, because the consequences of non-participation vary more than most people realize and not all firms are transparent about them. Gartner may include you anyway and score you lower in your absence. Forrester has a concept called full participation; if you don't meet that threshold, you don't receive the scorecard review, which is arguably the most valuable part of the process. IDC tends to be more negotiable. Some firms document these rules clearly. Others don't, which means you're relying on relationships and institutional knowledge to understand what you're actually opting out of. Start here, because you can't make an intelligent decision without it.

Step 2 - Understand whether there's a minimum viable version of showing up. Opting out and doing the bare minimum are different decisions, and it's worth knowing where that floor is before you conclude the floor is still too high. Forrester's full participation threshold is a good example of this — it's a real stopping point, not just a halfway measure. Know what the minimum looks like for each firm before you decide whether to exit entirely.

Step 3 - Assess risk in both directions. Most companies only do half of it. They think carefully about the risks of not participating (will buyers still find us, will the report still drive front-of-funnel activity, will analysts still take calls about us from prospective customers), and those are important questions. It's also worth noting that the report and analyst inquiry are related but distinct. A buyer who uses a Wave to build a shortlist is different from a buyer who calls an analyst three months into an evaluation to ask whether you're the right choice. Both matter, and they answer different questions about your exposure if you step back.

But the other direction matters just as much. Participation itself carries risk that tends to get underweighted - especially with these firms telling anyone who will listen that "being included at all is the most important thing!" Hard disagree! Having lived in sales, I assure you it's much easier to explain why you weren't included than why you were included but did badly. This conversation is especially critical when you're a partial fit: when the evaluation criteria are weighted toward capabilities or use cases that aren't your strengths.

Step 4 - Once you understand the risk landscape, model the worst case, and do it honestly in both directions. If you step back and your strongest competitor doubles down and earns Leader, what actually happens to your pipeline? And if you participate and place poorly, what does your sales team have to explain on every call? Sit with the FOMO of the first scenario and the discomfort of the second. Bring both with you to Step 6, transparently and directly. That's the honest internal conversation that needs to happen here.

Step 5 - Document the true cost of participation. We don't do this math, and we need to. Maybe you think about the budget line items: the advisory time with this specific analyst, or your subscription. But these things take a MASSIVE amount of time to do correctly, and it's time from expensive people who have revenue-driving tasks to complete: product managers responding to RFIs, executives prepping for briefings, sales engineers building a custom demo. Add it up, and add it into this analysis. It's hundreds of hours.
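If it helps to make that math concrete, here's a minimal sketch in Python of what "add it up" could look like. Every name and number in it is a placeholder I made up for illustration (the hours, the hourly rates, the `Contribution` structure); swap in your own estimates for your own people.

```python
from dataclasses import dataclass


@dataclass
class Contribution:
    """One group's time on a single evaluation (all values are hypothetical)."""
    role: str
    hours: float
    hourly_cost: float  # assumed fully loaded cost per hour, not a benchmark


def total_hours(items):
    """Sum the hours across every contributor."""
    return sum(c.hours for c in items)


def total_cost(items):
    """Sum hours multiplied by hourly cost across every contributor."""
    return sum(c.hours * c.hourly_cost for c in items)


# Placeholder estimates for one evaluation cycle; replace with your own.
contributions = [
    Contribution("Product managers (RFI responses)", hours=120, hourly_cost=100.0),
    Contribution("Executives (briefing prep)", hours=40, hourly_cost=250.0),
    Contribution("Sales engineers (custom demo)", hours=80, hourly_cost=110.0),
]

if __name__ == "__main__":
    for c in contributions:
        print(f"{c.role}: {c.hours:.0f} h, ${c.hours * c.hourly_cost:,.0f}")
    print(f"Total: {total_hours(contributions):.0f} h, ${total_cost(contributions):,.0f}")
```

The point isn't precision. It's putting the people-time next to the budget line items you already track, so the number that walks into the room in Step 6 reflects both.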

Step 6 - Align your company-wide decision. A truly strategic AR leader surfaces the full picture (benefits, risks, costs, competitive exposure) in the right room. In this case that room is the C-Suite. The CMO or the head of AR shouldn't be making this call alone and then explaining it later. Every executive stakeholder whose team is affected needs to look at the analysis, understand the potential consequences, and explicitly agree. Then document the decision.

The reason for that last part isn't bureaucratic. It's that analyst evaluations have a way of mattering intensely to people when results go sideways and mattering very little when someone has to justify the time investment. You want a record that shows this was a deliberate strategic choice made with full information, not an oversight or a resource failure. That record protects everyone in the room — including the person who built the case.

Communicating your decision back to the firm

If you've done this work and the answer is no, you need to communicate it directly and without unnecessary explanation. If there's a definitional offramp (e.g., your ideal customer profile has narrowed, or your roadmap is moving in a direction that doesn't fit the current evaluation scope), use it. It's clean, it's honest, and it doesn't require anyone to feel deprioritized.

If you don't have that option, a standards argument travels better than a resource one. Something in the spirit of: we've committed to only participating in evaluations where we can do it properly, and we can't make that commitment this cycle. That's a values statement, not a complaint, and it leaves the relationship intact.

What this conversation might unlock

You may also find that putting all of this on paper — the risks in both directions, the true cost, the competitive exposure — reignites a genuine willingness to resource the exercise appropriately. That happens more than you'd think. Sometimes the problem isn't that participation doesn't make sense. It's that no one has ever forced the honest conversation about what participation actually requires.

Either outcome is a better place to be than the alternative: enrolled in six evaluations, under-resourced for all of them, and hoping no one notices.

—

Up, and to the right!

Elena